Dagstuhl Seminar 19432

Analysis of Autonomous Mobile Collectives in Complex Physical Environments

( Oct 20 – Oct 23, 2019 )

Permalink

Organizers

- Mario Gleirscher (University of York, GB)

- Anne E. Haxthausen (Technical University of Denmark - Lyngby, DK)

- Martin Leucker (Universität Lübeck, DE)

- Sven Linker (University of Liverpool, GB)

Contact

- Andreas Dolzmann (for scientific matters)

- Susanne Bach-Bernhard (for administrative matters)

Schedule

In this Dagstuhl Seminar, we seek to examine how individual autonomous machines (e.g. vehicles or robots of any kind) and collectives thereof have to behave in complex physical environments such that acceptable safety and performance guarantees can be verifiably met. Machines, collectives, and the environment are subjected to uncertainty (e.g. changes, defects) and risk. Furthermore, complex environments involve interactions with human-controlled machines, humans, animals, and valuable assets not to be harmed or damaged.

To provide strong guarantees for autonomous mobile collectives in such environments, we have to model them at an appropriate level of abstraction, validate these models, verify desirable properties of these models, and, most importantly, certify these properties of any implementations of these models. A few weeks before the seminar, we will provide all participants with an application challenge, and encourage the researchers to model it, or parts of it, in their formalism of choice. Our aim is to discuss these models, to compare the demonstrated approaches, and to identify commonalities and synergies. During the seminar, the aim is to tightly interact with engineering practitioners. Practitioners will be encouraged to evaluate the crafted models and solutions based on their expertise of the application domain.

This approach will foster sustainable interaction of software and control engineering researchers using continuous models, uncertainty models, and coordination and communication models to reason about autonomous mobile collectives. The aim of the tight interaction with practitioners is to enhance relevant approaches for successful transfer into the engineering practice of autonomous mobile vehicles.

Mario Gleirscher, Anne E. Haxthausen, Martin Leucker, and Sven Linker

Mario Gleirscher, Anne E. Haxthausen, Martin Leucker, and Sven Linker

Motivation

Autonomous vehicles (AVs) are facing strong proof obligations. Individual AVs can be part of a collective (e.g. a platoon of utility vehicles on a farm field, a truck convoy on a highway, a convoy of passenger vehicles on urban road, an in-door aerial platoon, a railway convoy) and act within a heterogeneous environment of other collectives, for example, pedestrians, bicyclists, and motorcyclists. Multiple AVs might have to correctly and reliably negotiate their order of passing a crossing or reliably and robustly arrange in a certain work layout on agricultural land. Individuals and collectives in such environments, whether controlled in a centralised or distributed way, are subjected to change, uncertainty, and defects. Moreover, complex environments typically deny a comprehensive segregation of physical space and, hence, involve interactions with entities out of control (e.g. human-controlled machines, pedestrians, animals) and mostly also out of sight of an individual machine’s (short-range) sensors.

Obejctive

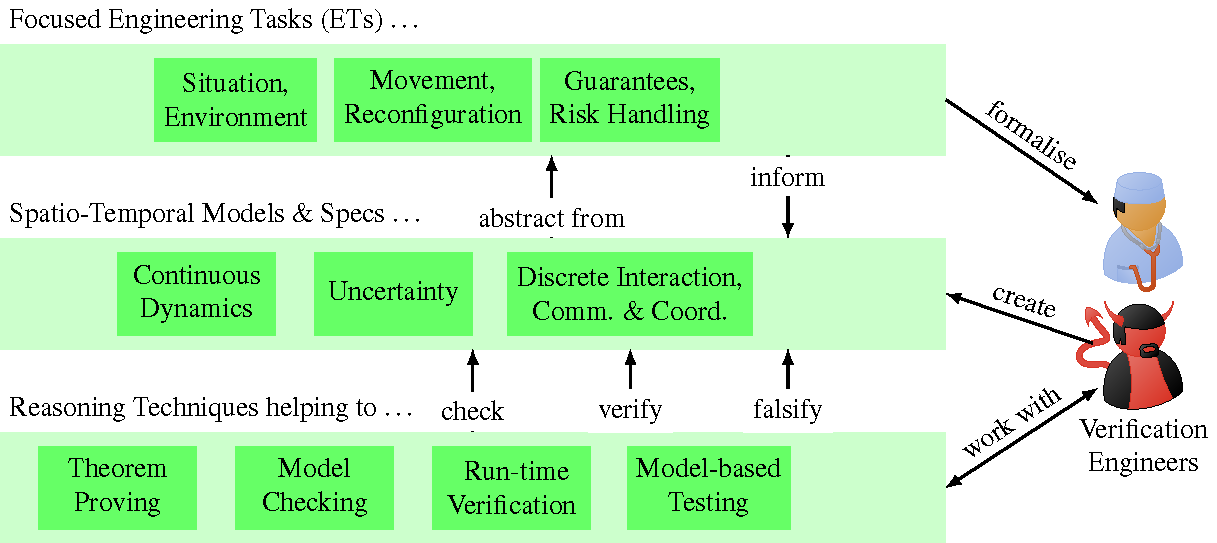

This seminar was centred around an application challenge, the Smart Farm. Participants were encouraged to discuss how their research addresses typical engineering tasks (ETs; upper layer in Fig. 1) to be accomplished for the given challenge or for similar challenges. These tasks include

- the identification, modelling, and analysis of operational situations in complex environments

- real-time coordination, composition, and reconfiguration of machine collectives with a focus on (i) interaction with human-operated systems, humans, animals, infrastructure and (ii) situation-specific centralised or distributed control regimes

- the determination of strongest safety and performance guarantees with a focus on (i) the estimation of upper resilience bounds of machine collectives and lower reliability bounds of individual machines and (ii) the determination of strongest guarantees under partial state knowledge, with minimal infrastructural support, and under reduced controllability.

In the discussions of how the ETs can be accomplished best, we also aimed at investigating abstractions of defects and uncertainties, for example:

- controller, communication, and infrastructure failures (e.g. erroneous vehicle-to-X connection and communication, deficient road infrastructure),

- undesired interference or disturbance of autonomous operation (e.g. malicious and unintended misuse; controller, communication, and infrastructure attacks),

- practical sensor uncertainties, actuator perturbations, and partial state knowledge.

Defects and uncertainties are crucial for constructing realistic models of the behavioural spectrum of mobile collectives and yet abstract enough to perform practical reasoning. Likewise, such models allow the necessary freedom to express ideal and actual behaviour, independent of whether such behaviour is desirable. This freedom can involve the use of non-deterministic models. In any case, a (property) specification would label some of the observable behaviours as desirable, some as undesirable, others somewhere in between (cf. quantitative verification). The more complete and precise such a specification, the better the distinction between correct, undesirable, and other classes of behaviours of a collective.

Our overall objective with this seminar was to gain a common understanding of acceptable safety and performance of autonomous mobile collectives in presence of defects and other uncertainties typically occurring in complex open environments. The overarching approach of all seminar contributions was the formal analysis and verification of behavioural correctness under these assumptions (lower layer in Fig. 1) by using techniques such as, e.g. theorem proving, model checking, run-time verification, and model-based testing. Our central assumption for this seminar was that the given application challenge or any similar challenges render individual methods for the analysis and verification of such systems insufficient. For example, in control-theoretic models such collectives are modelled by differential equations. Interaction within and among collectives and with their environment, governing these equations, cannot be easily encoded. Approaches that express such interactions well, however, typically struggle with the detailed description of the physical laws the AVs need to adhere to. Hence, for ensuring correct behaviour in such a setting, layered abstractions, corresponding models, and specialised reasoning techniques have to be combined.

Organisation

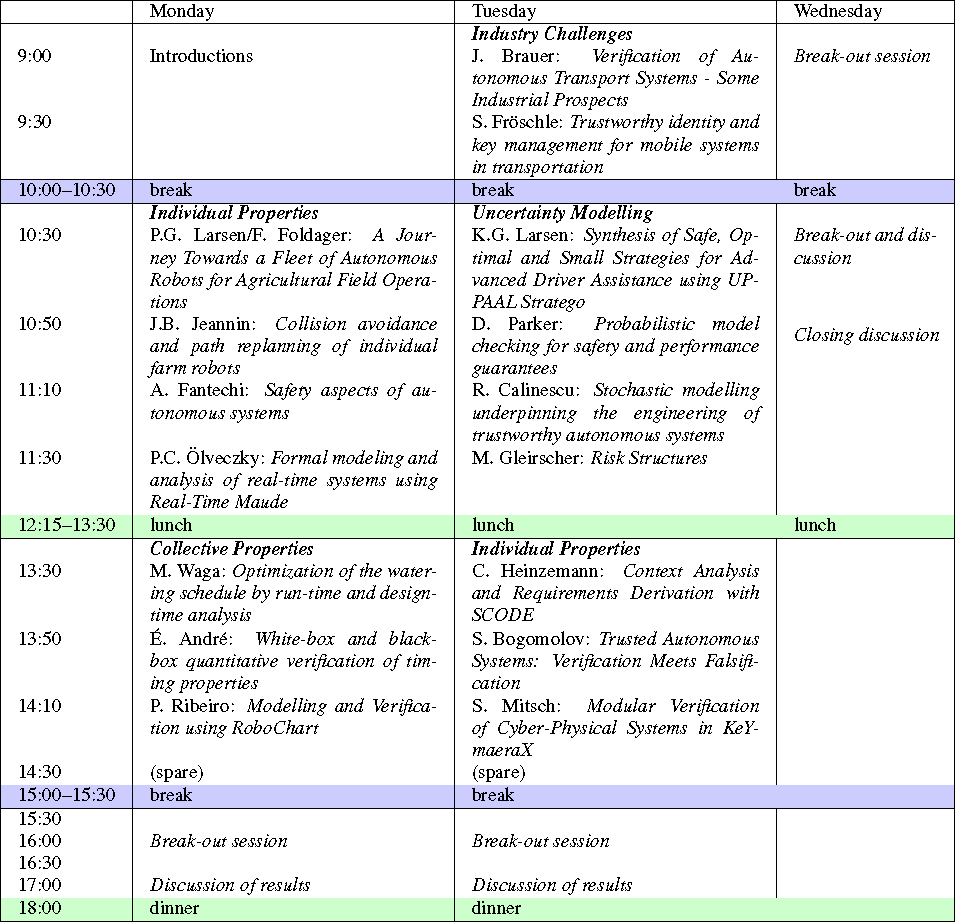

Before the seminar, we provided each participant with material about the application challenge (see Section 4.1) together with list of engineering tasks and research questions. We encouraged the participants to apply their approach, if available, to at least one of the ETs of the application challenge and to answer at least one of the research questions. Alternatively, participants were invited to present any research and practical experiences related to the seminar topic and the challenge. Everyone was given the opportunity to give a full-length talk. Table 1 shows the seminar structure, the talks, and further sessions. After the welcome session, participants introduced themselves to the group. The rest of the seminar was organised into talk sessions and break-out sessions.

In the talk sessions, we investigated several research questions from different angles. We had talks about (1) industry challenges, (2) the analysis and verification of properties of individual autonomous vehicles (two sessions), (3) the analysis and verification of properties of autonomous collectives, and (4) the modelling of uncertainty for the (quantitative) property verification of critical autonomous systems. Nine talks dealt with an introduction of a specific verification approach suitable for tackling an aspect of the application challenge, including a summary of the state-of-the-art of this approach. Four talks were about industrial examples of a nature similar to the Smart Farm, highlighting technical challenges, encountered issues, and perceived practical obstacles. Five talks focused on the application of a particular approach to a particular aspect of the Smart Farm, addressing some of the research questions.

In the following, we list the main questions and the participants whose talks highlighted a particular aspect of the corresponding question. For more details, see the list of talk abstracts below.

- How can each ET be solved? How can we achieve safety in presence of distribution, mobility, and uncertainty? Which mechanisms fit best to ensure safety in the application challenge?

Frederik Foldager and Peter Gorm Larsen - How do we model the systems and verify safety and progress properties? Can we always find acceptable Pareto optima over safety and performance, at traffic level, at the level of a collective, and for individual machines?

Étienne André, Sergiy Bogomolov, Kim Larsen, David Parker - How can we exploit the structure of practical AVs and collectives to craft specific verification techniques (e.g. prevent state space explosion, identify fundamental theorems)?

Stefan Mitsch, Pedro Ribeiro - Which benefits do we gain from integrating design-time verification, model-based testing, and run-time verification?

Mario Gleirscher, Masaki Waga - How can verification techniques be incorporated into the development process of AVs?

Jörg Brauer, Radu Calinescu, Alessandro Fantechi, Peter Csaba Ölvecky - Which complications arise from the verification of AVs and how can we mitigate the impact of these complications, particularly, during practical verification?

Sibylle Fröschle, Christian Heinzemann

Break-Out Sessions

To stimulate interaction, we created break-out groups on each seminar day and on the following topics: challenges of verifying autonomous collectives, the challenge of uncertainty (using, e.g. quantitative verification, parametric model checking), abstractions of space & uncertainty, the impact of IT security issues on AV safety, and safe platooning. Additionally, several smaller groups (sometimes consisting of only two participants) met to discuss combinations and extensions of the topics they presented in their respective talks.

One break-out group focused on creating a big picture of the challenges of verifying autonomous mobile collectives in the Smart Farm. The identified problems include

- estimation of behavioural properties (e.g. exact arrival times of agents, dead-lock freedom of the plan), real-time interleaving of sensing and control, and finding the "sweet spot" between precision and performance when used at run-time,

- model checking at scale, when to use online or offline analysis for verification and synthesis (e.g. synthesis of distributed safety controllers for automatic repair/fallback),

- useful architectural abstractions, compositionality, and refinement (e.g. how to safely partition the tasks of a mission between system components or whole robots?),

- security of communication and robustness of control to communication glitches (e.g. how to integrate a jamming model into overall system verification?),

- languages/models for dealing with system failures (e.g. how to cope with failures of individual autonomous vehicles in the context of a collective?) and component failures (e.g. how to safely integrate machine learning into autonomous systems?), and

- safety in the presence of uncertainty (e.g. how to quantify uncertainty?, how to deal with uncertainty in parameters and in the structure of the system and the environment?).

Another group investigated the challenge of uncertainty in modelling, discussing how uncertainty (e.g. due to partial observability) can be dealt with in automated verification and how techniques such as quantitative verification can be used to solve verification problems with uncertainties in the considered parameters. Depending on the Smart Farm aspect to be tackled, state-of-the-art approaches include the use of interval abstractions for parameters, the calculation of confidence intervals for verification results, and the use of counterexample-guided abstraction refinement.

The break-out session on space and uncertainty stretched over all three days, and was concerned with the possible ways to specify spatial aspects, as well as how to incorporate uncertainty into such specifications. Our discussion proceeded on different topics. We discussed, which types of sensors allow robotic systems to gain spatial knowledge, and what levels of uncertainty can be expected. Based on this, we examined whether several layers of space are necessary and beneficial to specify both the systems and their desired properties (e.g., a discrete layer for planning high-level actions and a continuous layer, on which more local properties are ensured by controllers, as for example obstacle avoidance). Furthermore, we compared the different types of uncertainty, the level of spatial layers they occur on, and their impact on systems in the Smart Farm. This included a discussion of how much knowledge needs to be globally available, and what can be kept locally at the level of each individual entity. We realised that while the modelling scenario allowed for different levels of space and uncertainty, it was not easy and straightforward to identify necessary and interesting spatial properties to analyse. Hence, we agreed that the case study needs to allow for more degrees of freedom (e.g., different routes to reach physical targets, to permit several alternative plans).

The session about IT security of farm collectives focused on the aspect of communication security. First, the group identified the typical communication requirements between the actors of a smart farm such as: between a robot and a supervisory control (perhaps including a drone), between two robots that carry out a task on the same field (e.g. to carry out the task cooperatively or for collision avoidance), between a sensor and a control centre (e.g. for watering). Altogether, it became clear that the operation of a smart farm critically depends on the secure and timely communication between the various actors. It is also clear that in the setting of the smart farm the actors must communicate over wireless channels. Hence, the usual threats against communication over an open medium apply, e.g. message spoofing and manipulation, eavesdropping and jamming. On the one hand, this requires us to employ appropriate security protocols and key management, which can guarantee origin and message authenticity as well as confidentiality. On the other hand, this requires further measures against availability attacks such as jamming. The group focused on the threat of jamming. While jamming cannot be prevented in an open system the general idea was to take a 'detect and mitigate' approach. For example, jamming can be detected by the absence of regular 'heartbeat' signals and by combination with visual channels. Mitigation strategies involve raising an alarm and removing the jamming device in a timely fashion while ensuring the system is not overly susceptible to false positives and denial-of-service attacks. Neither detection nor mitigation seemed trivial when discussed in detail. On the positive side, the verification methods and tools presented at the seminar could be used to evaluate possible strategies, and perhaps, even to synthesise them. Later on the group joined the break-out group on platooning, where ommunication is particularly critical.

In the break-out session on safe platooning on the farm, we discussed

- the handling of planned events being part of the normal operation of a platoon (e.g. several farm vehicles, lorries and harvesters, form a platoon including leader election; a lorry wants to join or leave a harvesting platoon; a platoon with two consecutive lorries needs to be rearranged; a lorry decides to leave the platoon) and

- the detection of critical (not necessarily undesired) events to be dealt with or to recover from during normal operation (e.g. a foreign vehicle, a farmer's car, enters the platoon area; communication error because of a jamming attack or a hardware failure disturbs the platoon controller; the current leader looses trustworthiness, e.g. because of being hacked, by deviating from the common goal of the platoon; farm workers enter the working area of the platoon).

Our expectations for this first seminar were modest. We wanted to learn from each others' perspectives, to discuss available approaches, and to identify the hardest and most relevant open challenges.

Our discussions opened paths to an integration and application of the presented theories and models (middle layer in Fig. 1), particularly, continuous models (e.g. timed and hybrid automata), uncertainty models (e.g. Markov chains, probabilistic automata), communication and coordination models (e.g. timed process algebra). We investigated the use of such models in the context of various reasoning techniques (e.g.theorem proving, model checking, run-time verification, model-based testing). These discussions lay a basis for the derivation of guidelines on how the approaches, when applied to systems such as the Smart Farm, can be combined and/or enhanced to tackle the identified problems in practical contexts subject to certification efforts.

The attendees were from various fields such as formal verification, testing, certification, mechanical and control engineering, and embedded IT security, working at universities, in industry-oriented research institutes, or directly in industry. In this setting, we were able to share experiences and insights from various application domains (e.g. smart farming, smart energy systems, train/railway systems, automotive and transportation), to discuss issues of the Smart Farm scenario, and to examine potential research directions. Particularly, we observe that commonalities among the used approaches give rise to an integrated and more versatile approach. Our participants from industry receive the opportunity to convert any of these insights into lasting process improvements in their safety-critical domains. We expect our findings to be relevant to regulatory authorities in these domains.

In overall, we believe this seminar was an important step to foster collaboration of researchers and practitioners experienced with the different models and reasoning techniques, and to initiate a research community focusing on autonomous collectives of similar or even higher complexity than the Smart Farm. To that end, we are planning further meetings of the seminar's participants in the near future, to allow for further refinement of the models, and combinations of the methods presented. Additionally, we will further improve and extend the modelling scenario, so that a particular combination of specification and verification approaches can be explored in more detail. Eventually, we intend to collect our findings possibly in a special issue of a suitable journal.

Funding and Acknowledgements.

Sven Linker was supported by the Engineering and Physical Sciences Research Council programme grant EP/N007565/1 (S4: Science of Sensor Systems Software). Mario Gleirscher was supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under the Grant no. 381212925. We are grateful to Sibylle Fröschle for summarising the results of the IT security session. Further thanks go to Frederik Foldager for collecting and compiling the abstracts. We would like to spend sincere gratitude to all participants for their contributions and for their support and active engagement in making this seminar an insightful experience.

Mario Gleirscher, Anne E. Haxthausen, Martin Leucker, and Sven Linker

Mario Gleirscher, Anne E. Haxthausen, Martin Leucker, and Sven Linker

- Étienne André (University of Paris North, FR) [dblp]

- Andreas Bauer (KUKA Systems GmbH - Augsburg, DE) [dblp]

- Sergiy Bogomolov (Newcastle University, GB) [dblp]

- Jörg Brauer (Verified Systems International GmbH - Bremen, DE) [dblp]

- Radu Calinescu (University of York, GB) [dblp]

- Alessandro Fantechi (University of Florence, IT) [dblp]

- Frederik Foldager (Aarhus University, DK) [dblp]

- Sibylle Fröschle (OFFIS - Oldenburg, DE) [dblp]

- Mario Gleirscher (University of York, GB) [dblp]

- Anne E. Haxthausen (Technical University of Denmark - Lyngby, DK) [dblp]

- Christian Heinzemann (Robert Bosch GmbH - Stuttgart, DE) [dblp]

- Jean-Baptiste Jeannin (University of Michigan - Ann Arbor, US) [dblp]

- Kim Guldstrand Larsen (Aalborg University, DK) [dblp]

- Peter Gorm Larsen (Aarhus University, DK) [dblp]

- Martin Leucker (Universität Lübeck, DE) [dblp]

- Sven Linker (University of Liverpool, GB) [dblp]

- Stefan Mitsch (Carnegie Mellon University - Pittsburgh, US) [dblp]

- Laura Nenzi (University of Trieste, IT) [dblp]

- Peter Csaba Ölveczky (University of Oslo, NO) [dblp]

- David Parker (University of Birmingham, GB) [dblp]

- Pedro Ribeiro (University of York, GB) [dblp]

- Masaki Waga (National Institute of Informatics - Tokyo, JP) [dblp]

Related Seminars

Classification

- artificial intelligence / robotics

- modelling / simulation

- verification / logic

Keywords

- autonomous collectives

- formal verification

- hybrid systems

- control engineering

- uncertainty and risk

Creative Commons BY 3.0 DE

Creative Commons BY 3.0 DE